Global Settings

The Global Settings dialog can be opened from the gear icon on the right side of the menu bar.

Apart from Personal, Global settings are available to Admin users only.

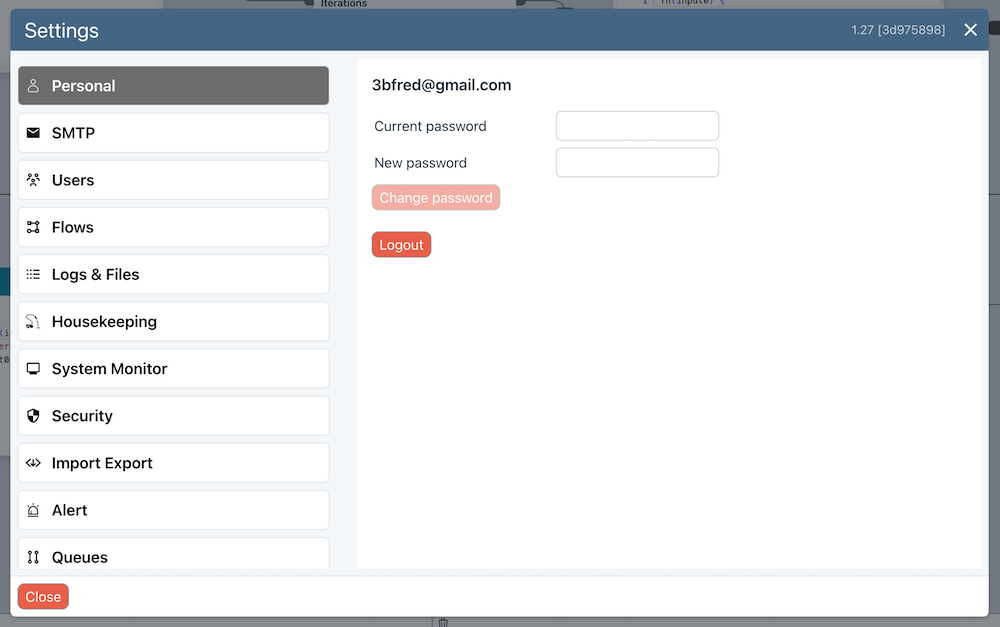

Personal

- Logout

- Change password

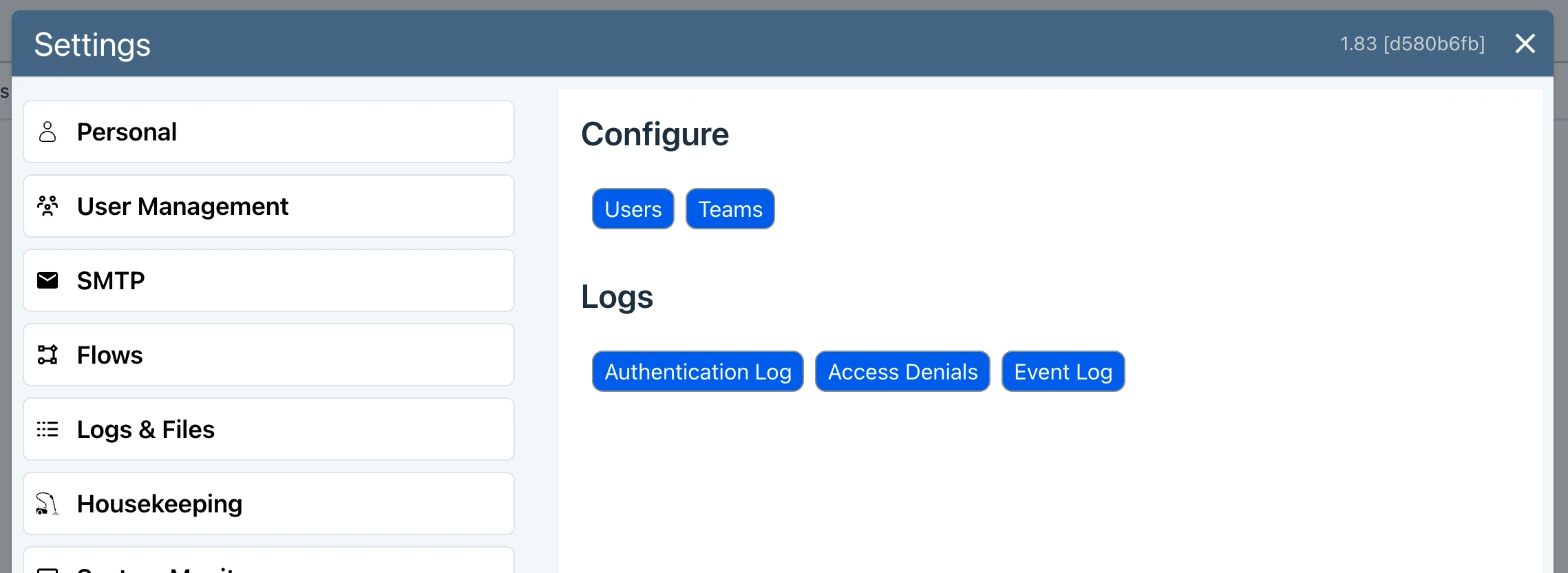

User Management

See RBAC and User Management for details on configuring Role-Based Access Control.

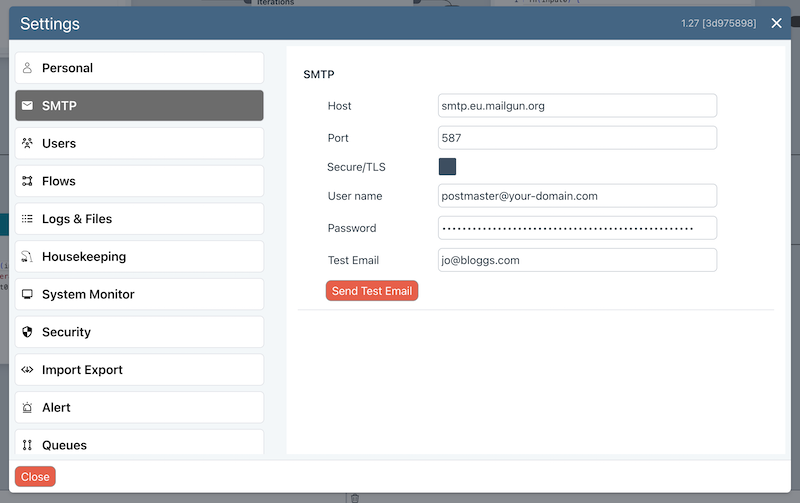

SMTP

An SMTP server is used for:

- Notifying users, for example when they are added or when a password reset email is sent.

- Sending Alerts

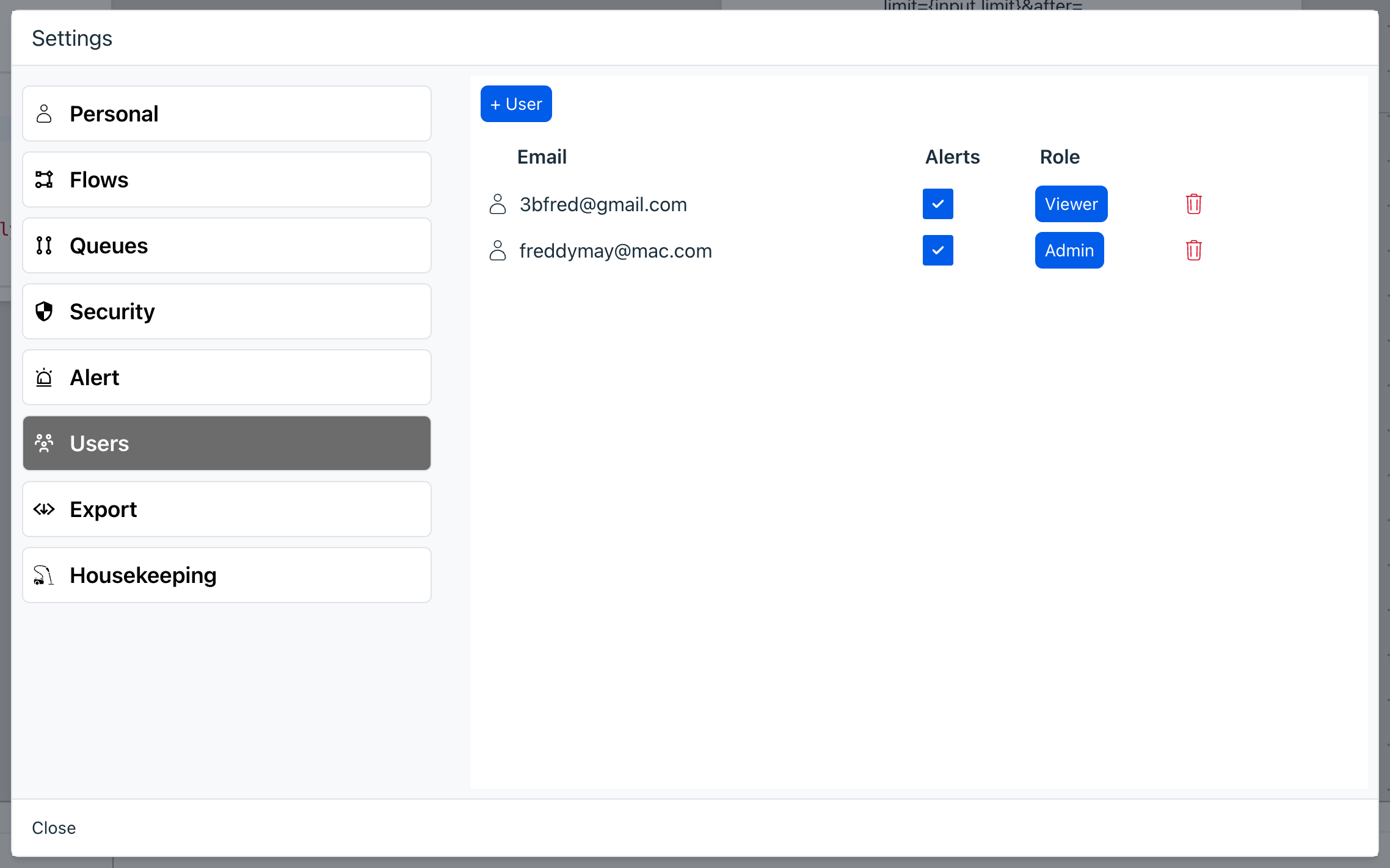

Users

Add users, assign their roles and specify whether they should receive alerts.

Currently, user roles other than Admin do not function.

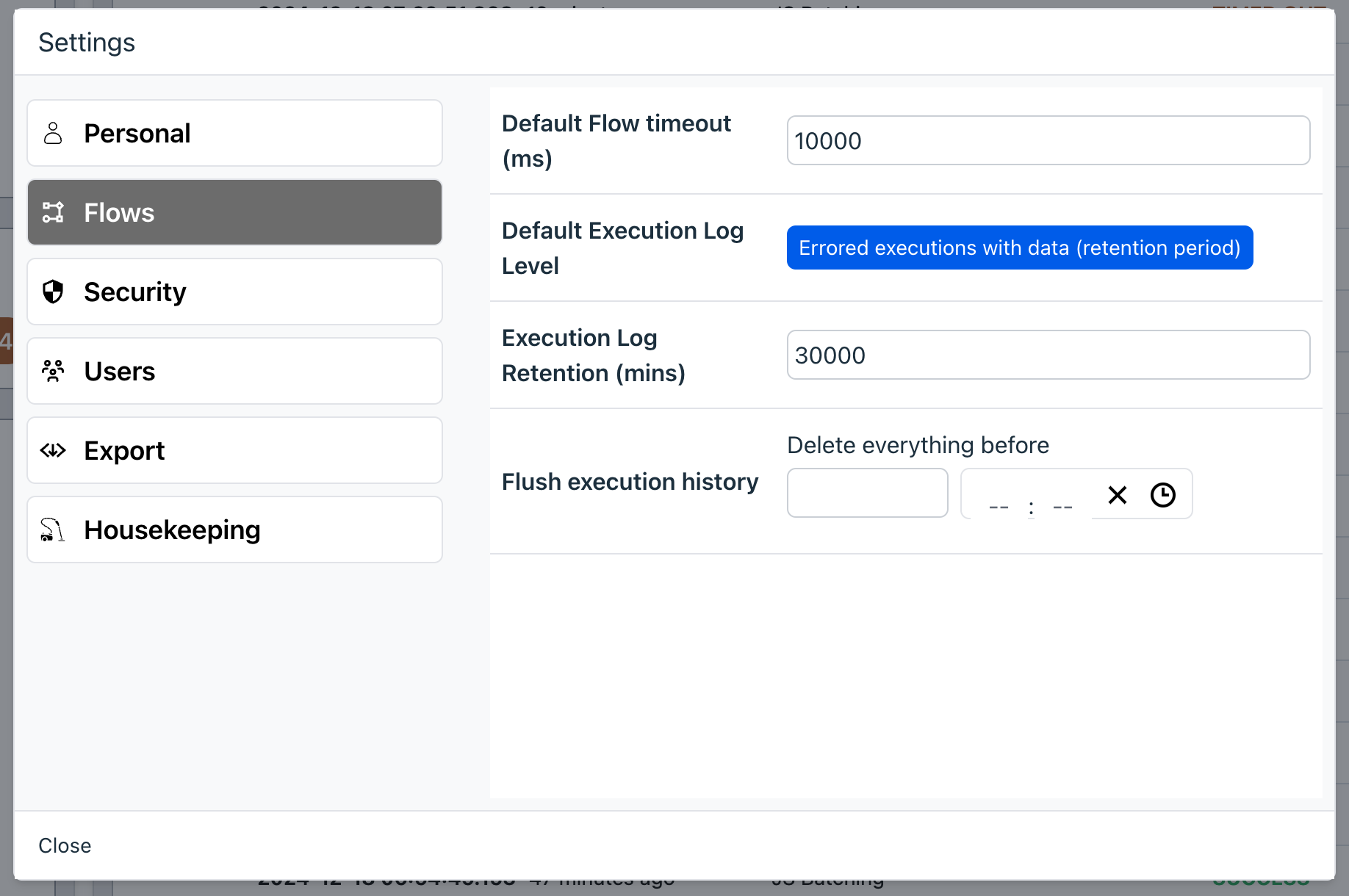

Flows

- Default Flow timeout - how long a Flow may run before it throws a timeout error. This can be overridden for an individual Flow in the Flow settings.

- Execution Log Retention (hours) - how long data remains in the execution history before being flushed automatically. This deletion is performed hourly. Please note that for data to be fully removed from the database a Full Vacuum or Scheduled Vacuum should be performed (see Housekeeping below).

- Successful Executions and Errored Executions - see below.

- Flush execution history - allows you to flush all execution history items before the specified date and time. You can also flush in a more selective manner from the Dashboard.

IMPORTANT - note that flushed data will remain in the database until you perform a database reorganisation. This can be done in the Housekeeping section in these Global Settings.
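To make the retention setting concrete, here is a minimal sketch of how a cutoff time could be derived from Execution Log Retention (hours). The function names and the timestamp comparison are assumptions for illustration only; they are not Ziggy's actual implementation.

```python
from datetime import datetime, timedelta, timezone


def retention_cutoff(now: datetime, retention_hours: int) -> datetime:
    """Return the time before which execution history is eligible for flushing."""
    if retention_hours < 0:
        raise ValueError("retention_hours must not be negative")
    return now - timedelta(hours=retention_hours)


def is_expired(executed_at: datetime, now: datetime, retention_hours: int) -> bool:
    """True if an execution record is older than the retention period."""
    return executed_at < retention_cutoff(now, retention_hours)


# Hypothetical example: a 48 hour retention period evaluated at a fixed time.
now = datetime(2025, 1, 18, 12, 0, tzinfo=timezone.utc)
old_record = datetime(2025, 1, 16, 11, 59, tzinfo=timezone.utc)
recent_record = datetime(2025, 1, 17, 9, 30, tzinfo=timezone.utc)

print(is_expired(old_record, now, 48))     # True
print(is_expired(recent_record, now, 48))  # False
```

Because the automatic deletion runs hourly, a record may remain visible for up to an hour after it passes the cutoff.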

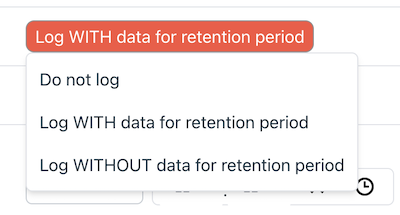

Successful Executions and Errored Executions

These provide the default approach to storing data. Ziggy gives you full control over whether and how execution history data is stored. This allows you to strike the correct balance between security, information and debuggability.

For both, you have the following options. See above for information about the retention period.

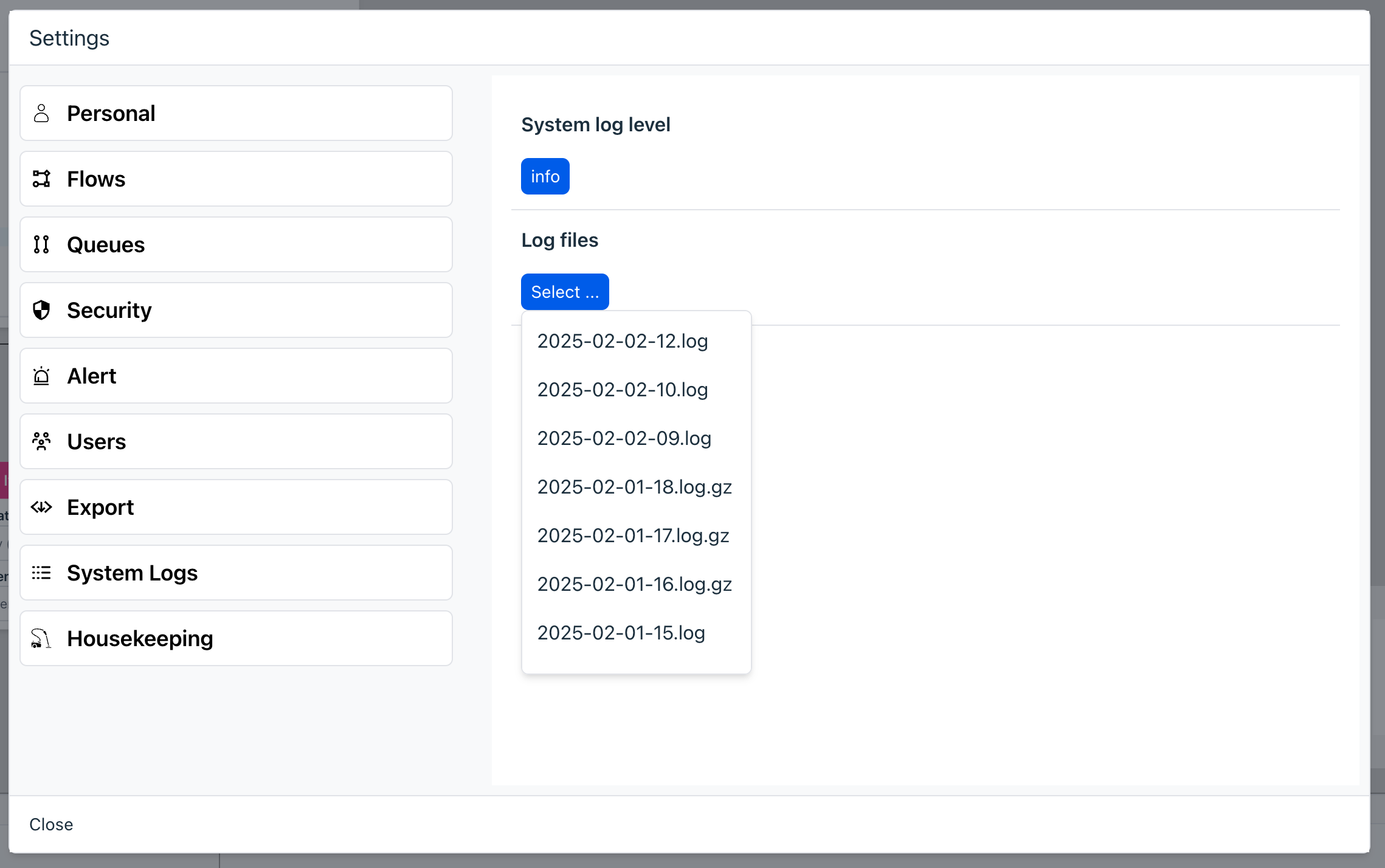

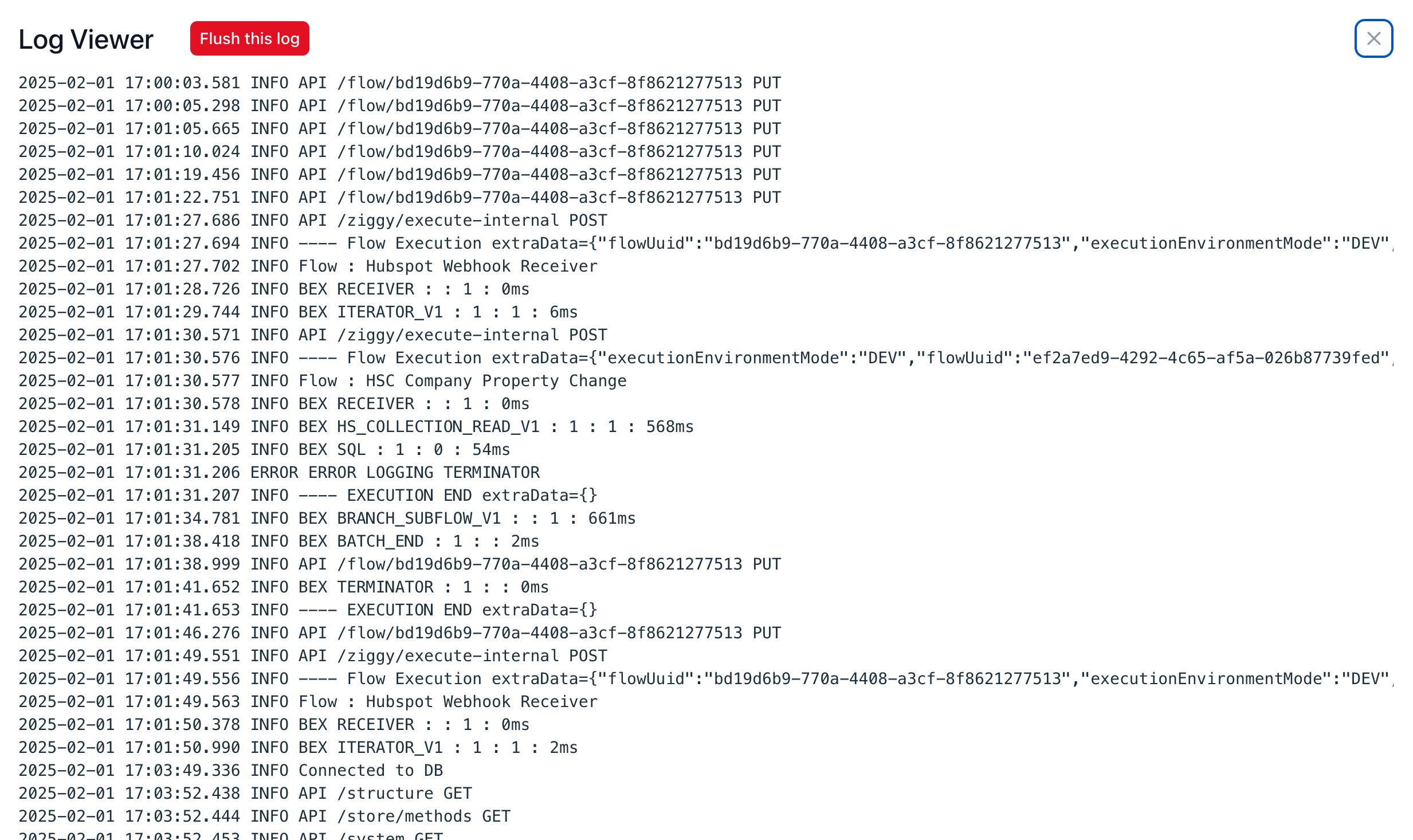

Logs & Files

This lets you

- set the server log level (you should generally use Info)

- view all system logs

- view files that are written to the Ziggy file system (File Block)

- set node and edge IDs (for low level debugging support only)

You can select a log file to view from the Log files dropdown. This will then open up a log viewer.

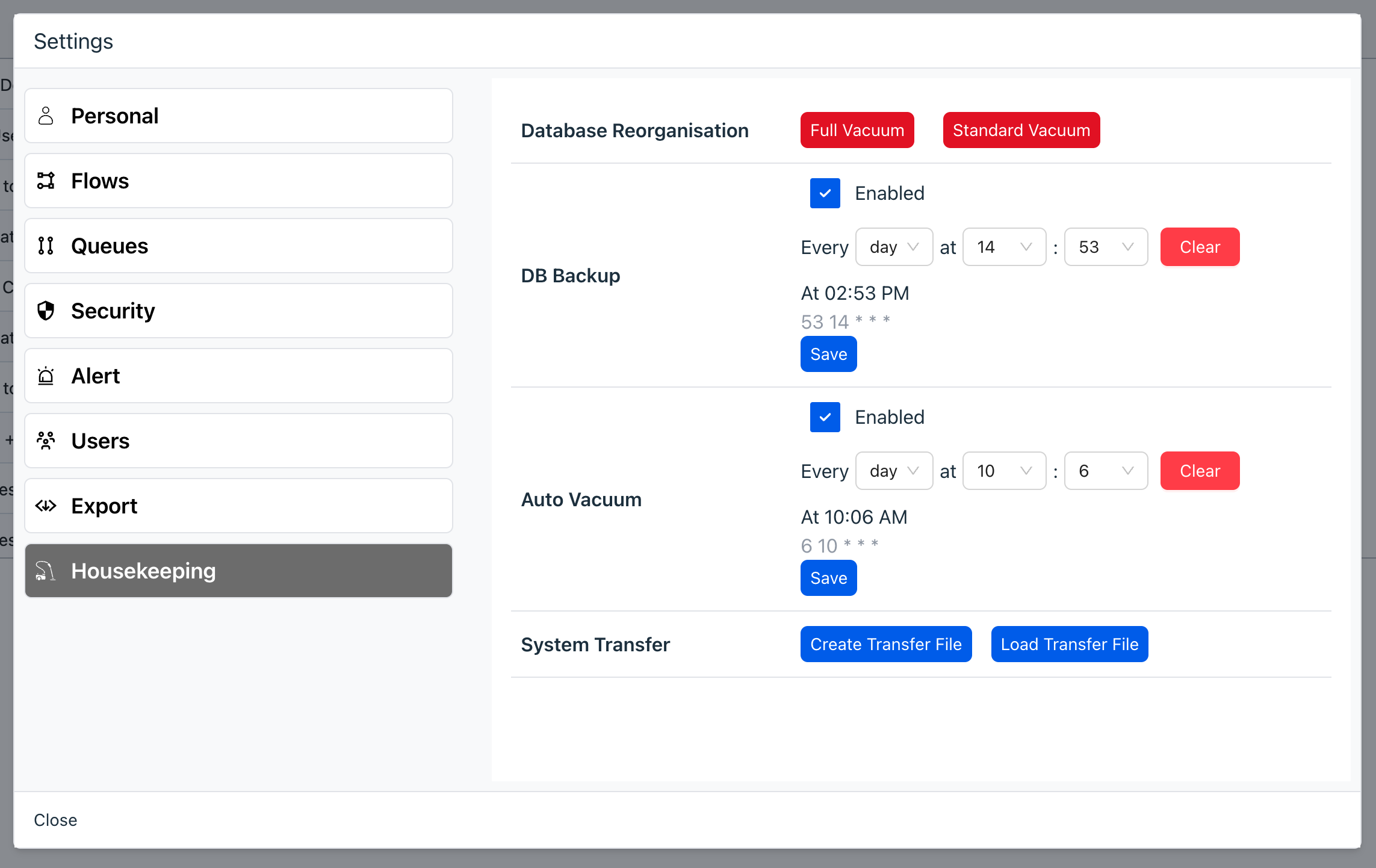

Housekeeping

Database Reorganization

When data is deleted from the database, records are flagged for deletion rather than fully deleted. As a result, the database will increase in size over time. Flow execution history uses the most database space.

Execution history items are automatically deleted as per the Execution Log Retention (hours) setting in the Flows settings section. The higher this value, the larger your database and the longer reorganization will take. 48 to 168 hours is typical, as you will usually be interested in failed executions that happened recently.

The Reorganize button performs a database vacuum that fully deletes all records in all tables that are flagged for deletion.

Vacuuming will slow the database down, so it is best done when load is at its lowest.

Note the Auto Vacuum settings that perform this automatically on a schedule you specify.

DB Backup

You should always create a database backup schedule.

The backup is created on the server itself, so if you lose your storage system, you will lose not only the database (if it is configured to run on the server, which is the default), but also the backup.

For this reason, you are advised to use the Remote backup option.

- Create a Connection for either S3 or Azure.

- Specify the bucket name

- Test it - this creates a test file called Test-Remote.txt. If this file is created, run a manual backup by pressing the Backup Now button and check that the backup file is created.

Whenever the backup is performed (whether manually or scheduled) the backup should be copied to the remote storage.

You could also set up custom scripts on the server to perform other remote backup configurations. The backup folder is in

Auto Vacuum

Performs a database reorganization at the specified time(s). It is suggested you do this daily at a time when load is lowest, as it will slow database performance while it runs.

System Transfer

Create Transfer File - creates a database backup that is then automatically downloaded to your computer. If you do nothing else then this is effectively a local backup.

Load Transfer File - lets you choose a Transfer File that you created using the above step and load it into the Ziggy system you are currently working with. The database is completely replaced; the existing database is backed up but is no longer online.

Important: you should always create a backup or a Transfer File on the system you are about to load the remote Transfer File INTO. The transfer process also retains the existing database as a backup named ziggy-pre-transfer-backup-timestamp.sql.gz.

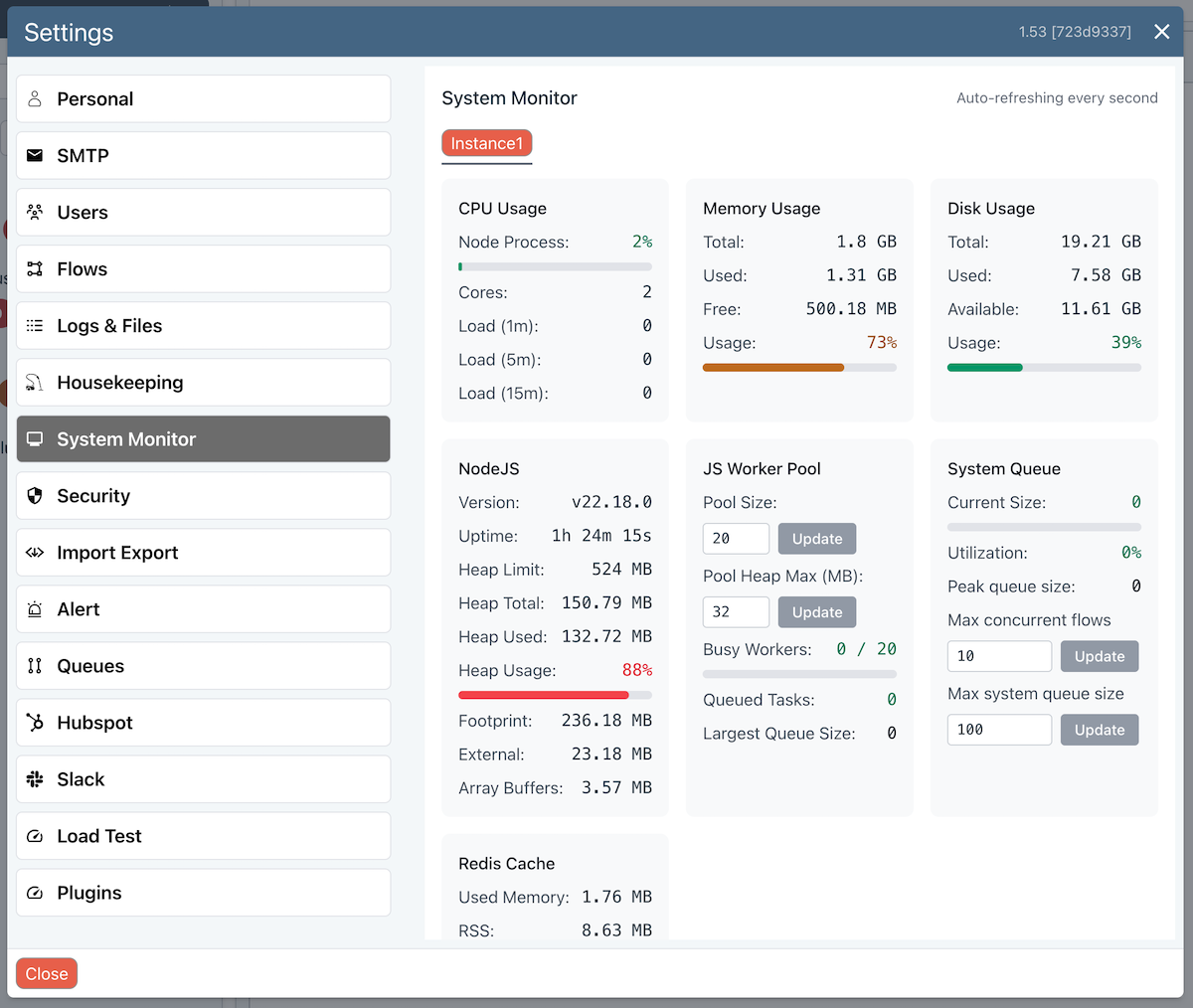

System Monitor

This lets you monitor all important aspects of your Ziggy server.

This screen lets you temporarily adjust the JavaScript Worker Pool and heap sizes. The adjustments last until your server restarts.

Refer to Performance Tuning for details.

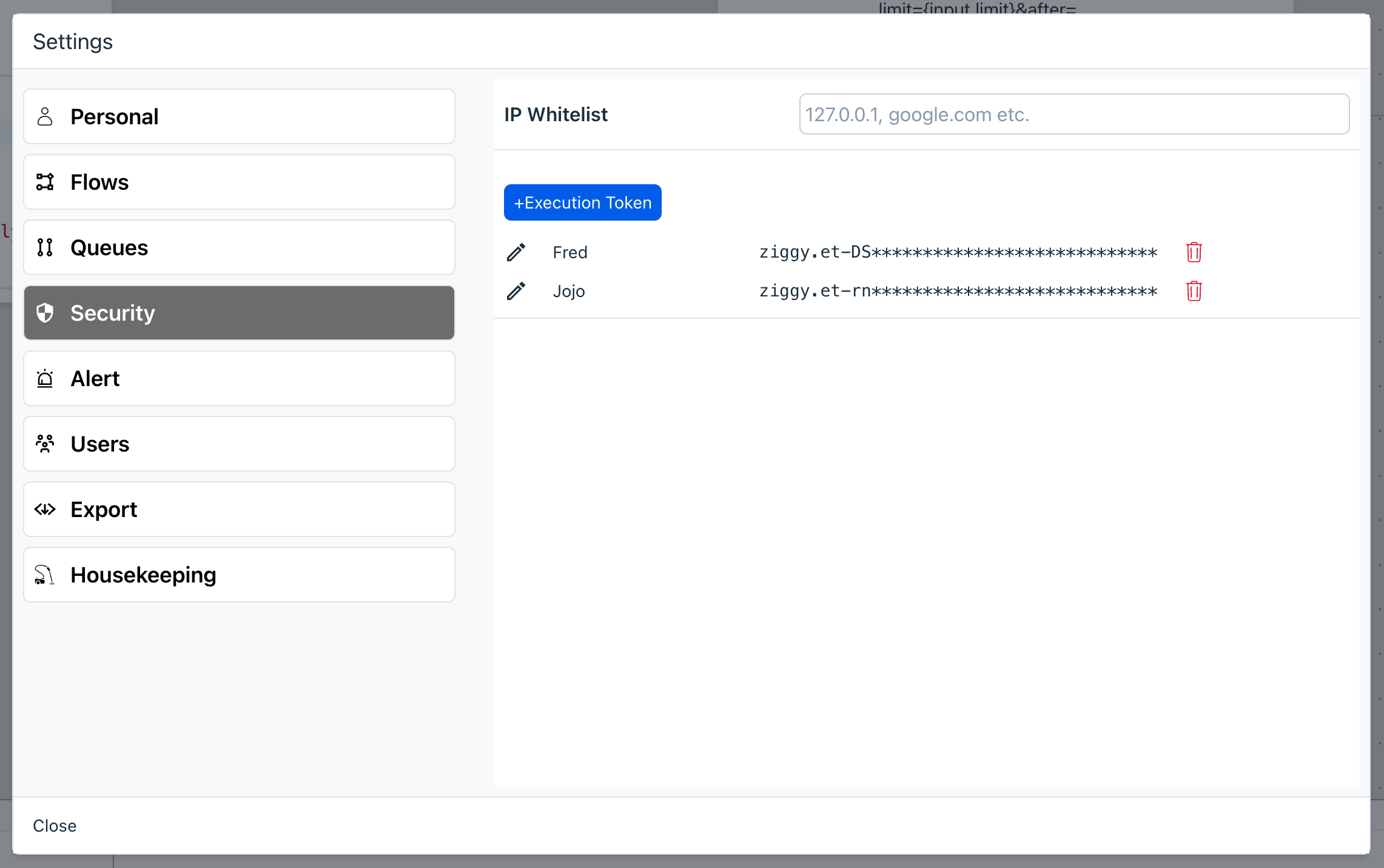

Security

External execution tokens

A token must be used when external systems call the Ziggy API to launch a flow.

- +Execution token - this will generate a new random token. You will be shown it one time only.

- Rename token - if, for example, you want to assign tokens to an individual or group, you can rename the token to make it clear who it belongs to. This is useful when revoking a token.

- Revoke token - press the trash icon to revoke the token. Once revoked, it cannot be restored.

Import/Export

This exports a JSON representation of the system (except for execution history). Secrets are exported without exposing their values.

This is in preparation for a corresponding import feature that will let you selectively import entities into a new Ziggy instance.

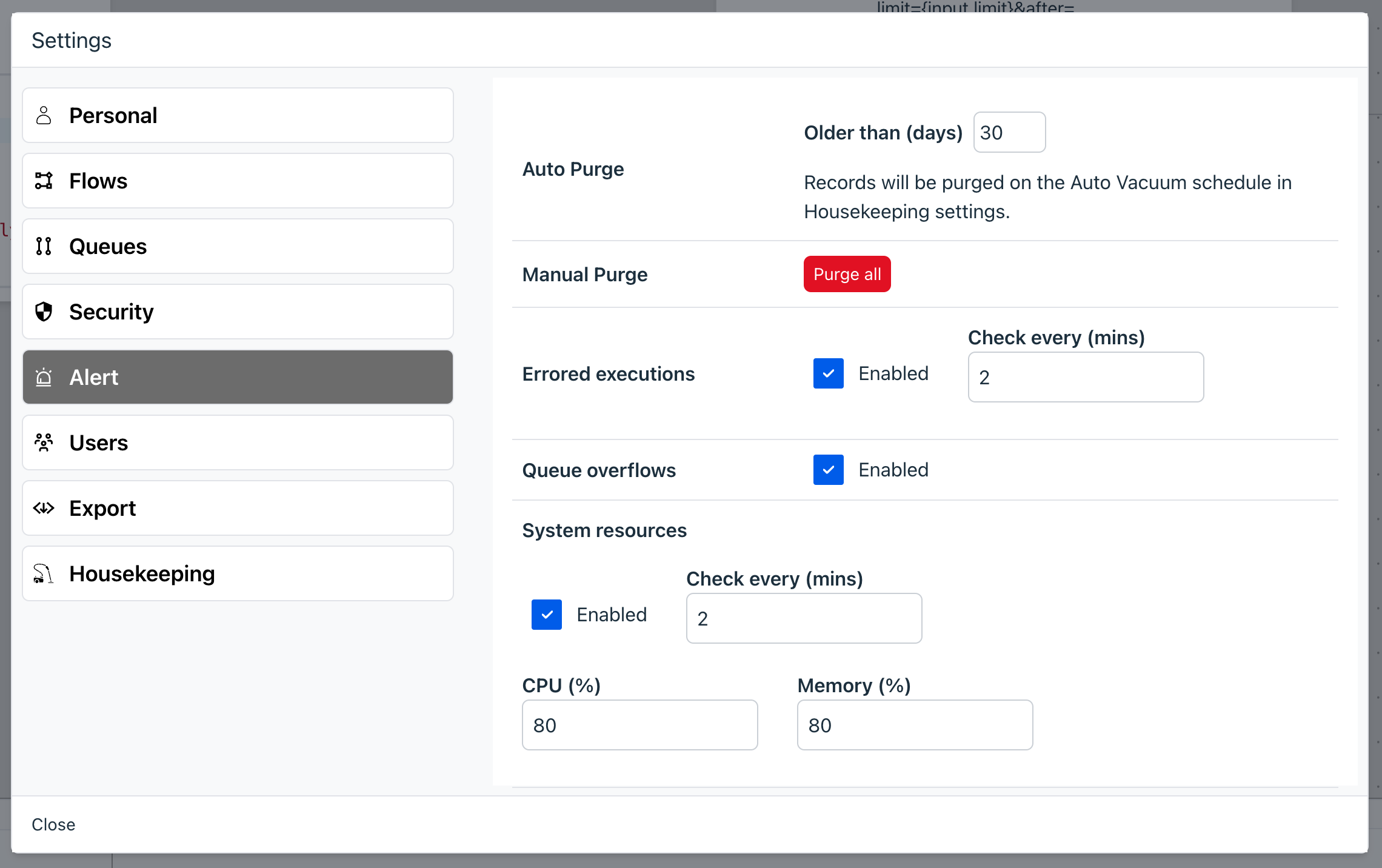

Alerts

This lets you set alert options and thresholds. Please read the Alerts topic for full details on alerts and the alert log.

DB Backup

You can specify a schedule for backing up the database.

The backup files are created in the volume-data/backups folder with names of the form ziggy_dump_20250118_133746.dump.

You should configure your docker-compose.yaml file to persist this folder to a location on the host. You might also want to set up extra automation to copy these backups to an S3 bucket or other location in case your host dies and backup data is lost.
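If you script your own copying of backups, it can help to pick out the most recent file by the timestamp in its name. The sketch below assumes the name pattern is ziggy_dump_YYYYMMDD_HHMMSS.dump, which is inferred from the single example above rather than from a documented specification. The helper names are hypothetical.

```python
import re
from datetime import datetime
from typing import Iterable, Optional

# Assumed pattern, inferred from the example name ziggy_dump_20250118_133746.dump.
BACKUP_NAME = re.compile(r"^ziggy_dump_(\d{8})_(\d{6})\.dump$")


def backup_timestamp(filename: str) -> Optional[datetime]:
    """Parse the timestamp from a backup file name, or return None if it does not match."""
    match = BACKUP_NAME.match(filename)
    if match is None:
        return None
    try:
        return datetime.strptime(match.group(1) + match.group(2), "%Y%m%d%H%M%S")
    except ValueError:
        # Digits were present but do not form a valid date and time.
        return None


def newest_backup(filenames: Iterable[str]) -> Optional[str]:
    """Return the name of the most recent backup, ignoring unrelated files."""
    dated = [(ts, name) for name in filenames
             if (ts := backup_timestamp(name)) is not None]
    return max(dated)[1] if dated else None


files = [
    "ziggy_dump_20250117_020000.dump",
    "ziggy_dump_20250118_133746.dump",
    "notes.txt",
]
print(newest_backup(files))  # ziggy_dump_20250118_133746.dump
```

A script like this could then hand the selected file to whatever upload tool you use for your remote storage.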

Auto Vacuum

You can specify a schedule for performing a Full Vacuum on the database. This can be useful for the following reasons.

- Keep your database optimised for performance reasons.

- Security - if you have configured the Execution History to store errored or full execution history, this will ensure data is fully removed from the database.

Important - the Scheduled Vacuum will block the database, so it should be performed at times of no activity. You should also perform this operation regularly, so that the amount of deletion and reorganisation required does not accumulate and each run takes less time.

Auto deletion based on Retention Time

Above, in the Flows section, you can specify that Execution History data is automatically deleted after a specified number of hours. Note that this will flag such records for deletion but not actually remove them from the database. A Full Vacuum or a Scheduled Vacuum will ensure that data is fully removed.

System Transfer

This lets you transfer your entire database to another Ziggy instance.

- Create Transfer File generates the file to be transferred.

- Load Transfer File restores this into the target Ziggy instance.

Please refer to Transferring Data for more information.

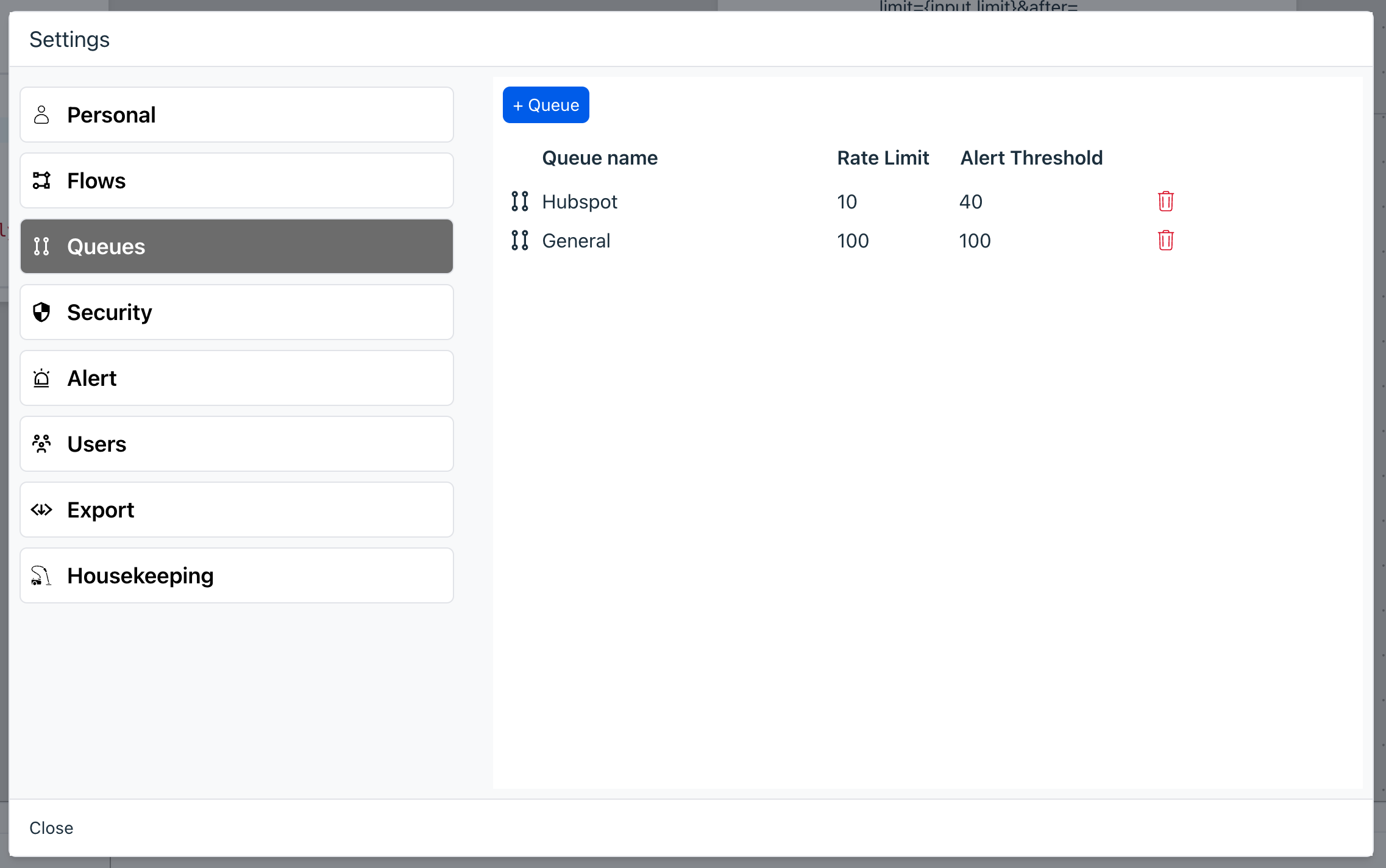

Queues

Manage your User Defined Queues, which are used for generalised queueing.

- Add a Queue - you can queue Flow execution by selecting the Queue in the Receiver Block.

- Rate limit - this will ensure that Flows are executed according to a per second rate limit value you specify here. This feature works across all Flows that use the same Queue ensuring you never exceed the target API's ceiling value.

- Overflow threshold - if the queue size ever exceeds this value then data is queued in the database (rather than in memory). Alerts are also sent.

- Delete queue - the delete icon lets you delete a Queue. You should be careful when deleting a Queue, as any Flow using it will fail.
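The idea behind a rate limit shared by every Flow on the same Queue can be sketched as a single limiter that hands out evenly spaced time slots. This is an illustration of the general technique only. The class name, the slot-spacing approach and the injectable clock are all assumptions, and Ziggy's internal queueing may work differently.

```python
import threading
import time


class SharedRateLimiter:
    """Spaces out calls so that at most `rate_per_second` of them start each second."""

    def __init__(self, rate_per_second: float, clock=time.monotonic, sleep=time.sleep):
        if rate_per_second <= 0:
            raise ValueError("rate_per_second must be positive")
        self._interval = 1.0 / rate_per_second
        self._clock = clock
        self._sleep = sleep
        self._lock = threading.Lock()
        self._next_slot = clock()

    def acquire(self) -> float:
        """Wait for the next free slot and return the number of seconds waited."""
        with self._lock:
            now = self._clock()
            slot = max(self._next_slot, now)
            # Reserve the slot after this one for the next caller.
            self._next_slot = slot + self._interval
        wait = slot - now
        if wait > 0:
            self._sleep(wait)
        return wait


# Hypothetical usage: two flows share one limiter, so their combined rate is capped.
limiter = SharedRateLimiter(rate_per_second=5)
for flow_name in ("flow-a", "flow-b", "flow-a"):
    waited = limiter.acquire()
    print(f"{flow_name} started after waiting {waited:.2f}s")
```

Because all callers draw slots from the same limiter, the cap applies to their combined rate rather than to each Flow separately.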

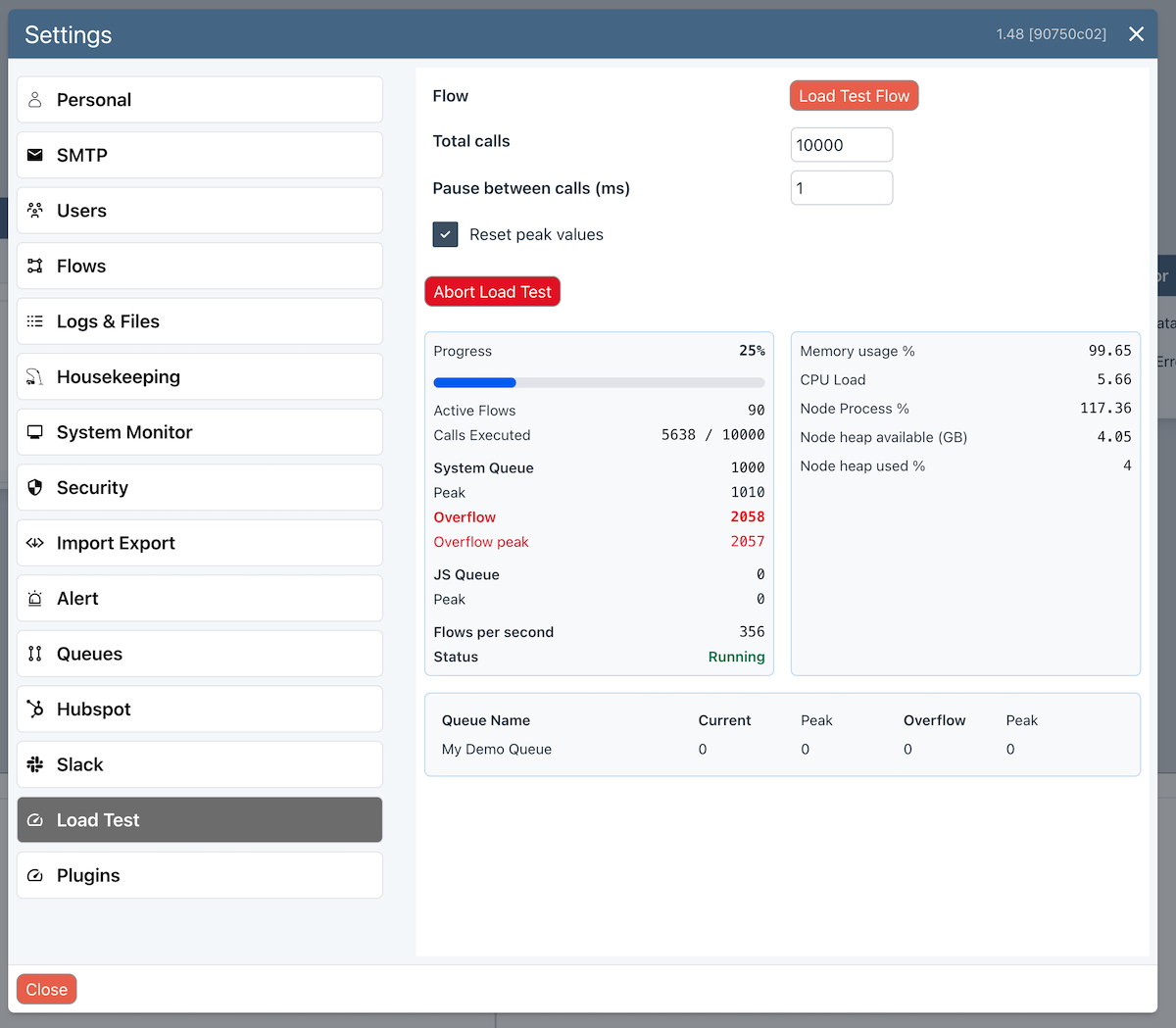

Load Test

If you are launching Flows from external API calls, then the load test can simulate the conditions you expect in real life.

To help you with performance tuning, we provide a Load Testing option that lets you run a large number of Flows at any specified rate. The test results show clearly how key indicators are affected.

You can adjust Max Concurrent Flows, System Queue Size and JavaScript Worker Pool size in the System Monitor.

You should also be aware that if you are using User Queues for rate limiting, this can significantly affect performance and adjusting the above values will not improve performance if your Flow is being rate limited by your queue.

Settings are as follows:

- Flow - the flow to test with.

- Total Calls - total number of Flows to execute.

- Pause between calls - how long to wait between each flow execution call. 1 ms is the heaviest load possible.

- Reset peak values - resets the peak queue values before running the test.
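When choosing values for Total Calls and Pause between calls, a quick estimate of the resulting call rate and test length can be useful. The sketch below assumes the pause is given in milliseconds and that issuing each call takes negligible time, so it gives a lower bound on the duration rather than a measured figure. The function name is hypothetical.

```python
def load_test_estimate(total_calls: int, pause_ms: float) -> dict:
    """Estimate the call rate and dispatch time for a load test configuration."""
    if total_calls < 1:
        raise ValueError("total_calls must be at least 1")
    if pause_ms <= 0:
        raise ValueError("pause_ms must be positive")
    # One call is launched per pause interval.
    calls_per_second = 1000.0 / pause_ms
    # N calls are separated by N - 1 pauses.
    dispatch_seconds = (total_calls - 1) * pause_ms / 1000.0
    return {
        "calls_per_second": calls_per_second,
        "dispatch_seconds": dispatch_seconds,
    }


estimate = load_test_estimate(total_calls=10_000, pause_ms=1)
print(f"{estimate['calls_per_second']:.0f} calls/s")
print(f"{estimate['dispatch_seconds']:.3f} s to dispatch all calls")
```

Under these assumptions, 10,000 calls with a 1 ms pause are dispatched at roughly 1,000 calls per second in about 10 seconds. The time for all Flows to finish also depends on the Flow itself and on any queue rate limit.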